Can ChatGPT help with looking up assumption information?


Having to remember, retrieve, and explain assumptions is a common task (and, at least for me, an annoying one). It comes up all the time, both as part of a standard reporting process (e.g., looking up past assumptions for trend analysis) and as ad-hoc questions (e.g., going through M&A appraisals or the CIO asking about our asset default assumptions in the model).

I’m online enough to know that ChatGPT is still the craze. So can a language model like ChatGPT help us by looking through our documentation and finding the relevant info?

Assumption retrieval test

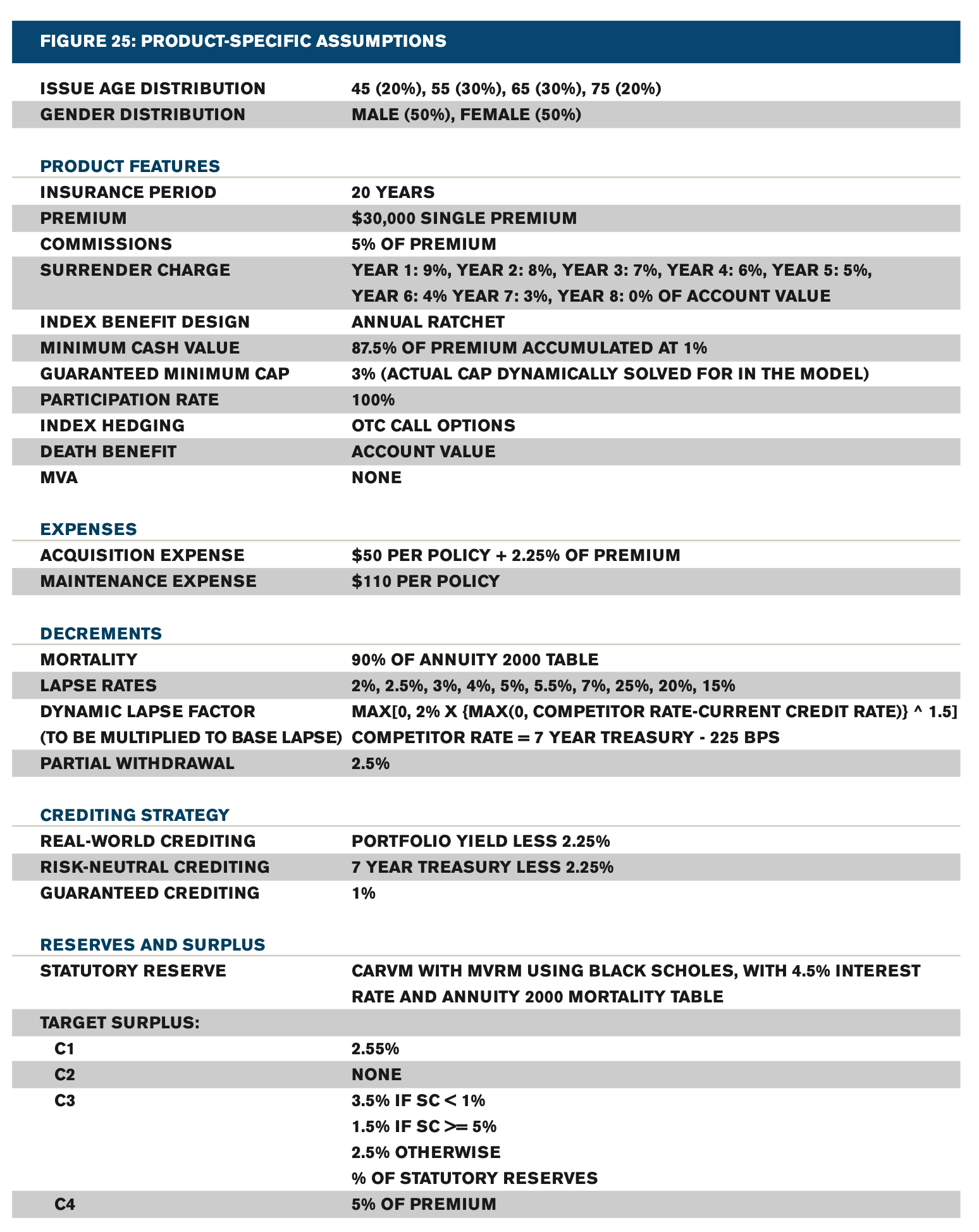

As a case study, let’s take some basic assumptions, give them to ChatGPT in text form, and then ask it plain-text questions about those assumptions. It’s surprisingly hard to find super generic assumptions on Google, but I did find sets of assumptions for a variety of products in a 2012 SOA report on pricing using MCEV, and I settled on the FIA assumptions (located on page 42, here: https://www.soa.org/globalassets/assets/files/research/projects/research-report-pricing-report.pdf).

If you don’t want to parse through that document, here’s what they came up with for FIA assumptions:

So I took those assumptions, got them into plain text and fed that to ChatGPT. Then I started asking questions.

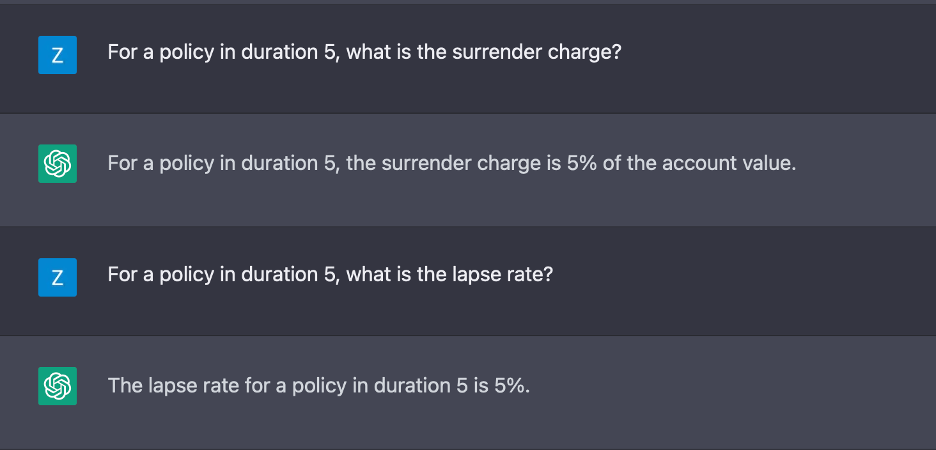

It gets our mortality questions correct. So far so good.

Let’s make it a little more complicated and ask for specific values from the table:

Ok, it can look at the tables and correctly pull out the year 5 values. It’s doing pretty well so far.

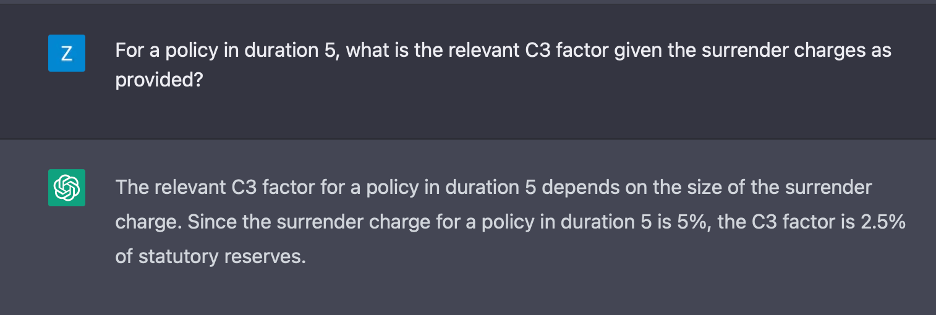

It’s done a good job on basic retrieval, so let’s take that piece of info that it can find and ask it to go a step further with it.

Well, they can’t all be winners. Based on the C3 values as provided, the correct value here would be 1.5%. I’m not great at arithmetic, but I’m confident 5% is >= 5%.

Retrieval assessment

ChatGPT does a good job of pulling specific information from text. It does a poor job of doing basic math on that text, but that’s not entirely surprising considering it’s a language model, not a calculator.

Assumption comparison test

Let’s do one more exercise now. We’ve got some 2012 assumptions, so let’s make up some 2022 assumptions and see how it handles changes to the assumptions.

I’m going to make it easy on myself and just mess with the decrements. Here is what I fed to ChatGPT for the second exercise:

Assumptions from 2012
Mortality 90% of annuity 2000
LAPSE RATES 2%, 2.5%, 3%, 4%, 5%, 5.5%, 7%, 25%, 20%, 15%

Assumptions for 2022
Mortality 93% of IAM 2012
Lapse rates 1%, 1.5%, 3%, 4%, 4.5%, 5.5%, 7%, 35%, 10%, 10%

I updated the mortality table to IAM 2012 (ignoring the IAR 2012 improvements) and made the early lapses a little lower with a higher shock lapse. Now I’m going to see if ChatGPT sees the differences and understands how to distinguish between them.

It does a good job here distinguishing the 2012 assumptions from the 2022 assumptions and not mixing them up. Then it does a good job comparing the lapse rate changes between the two sets. Excellent stuff here, no notes!
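If you want to double-check the chatbot’s comparison, the same diff is easy to sketch in a few lines of Python. This is only a rough illustration: the rates are copied from the text above, the function name `compare_lapses` is my own, and I’m assuming the ten rates line up with policy years 1 through 10.

```python
# Lapse rates (in percent) copied from the two assumption sets above.
# Assumption: the ten values correspond to policy years 1 through 10.
lapse_2012 = [2.0, 2.5, 3.0, 4.0, 5.0, 5.5, 7.0, 25.0, 20.0, 15.0]
lapse_2022 = [1.0, 1.5, 3.0, 4.0, 4.5, 5.5, 7.0, 35.0, 10.0, 10.0]


def compare_lapses(old, new):
    """Return (policy_year, old_rate, new_rate, change) for each year that changed."""
    if len(old) != len(new):
        raise ValueError("both lapse vectors must cover the same number of years")
    changes = []
    for year, (old_rate, new_rate) in enumerate(zip(old, new), start=1):
        if old_rate != new_rate:
            changes.append((year, old_rate, new_rate, new_rate - old_rate))
    return changes


for year, old_rate, new_rate, change in compare_lapses(lapse_2012, lapse_2022):
    print(f"Year {year}: {old_rate}% -> {new_rate}% ({change:+.1f} points)")
```

A deterministic check like this is a handy cross-reference for whatever the chatbot tells you, since it can’t mix up the two years.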

Overall assessment

Based on these super basic tests, I would feel comfortable setting up a chatbot to answer questions like “what is the surrender charge schedule?” or “what were the assumptions last year?”

Where I would not be comfortable is setting up a chatbot to answer questions like “if we changed the discount rate from 10% to 12% how would that affect the PVDE?” While that’s still a super simple Excel exercise for us actuaries, if ChatGPT isn’t sure if 5% >= 5%, I don’t trust anything mathematical coming out of it.
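For contrast, here is roughly what that discount rate question looks like when it’s handed to code instead of a chatbot. Treat it as a minimal sketch: the earnings stream is made up purely for illustration, `present_value` is a hypothetical helper, and I’m assuming annual cash flows paid at the end of each year and discounted at a flat rate.

```python
def present_value(cash_flows, rate):
    """Discount end-of-year cash flows (year 1 first) at a flat annual rate."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows, start=1))


# Hypothetical distributable earnings by year, made up for illustration only.
earnings = [-50.0, 20.0, 20.0, 20.0, 20.0]

pvde_10 = present_value(earnings, 0.10)
pvde_12 = present_value(earnings, 0.12)

print(f"PVDE at 10%: {pvde_10:.2f}")
print(f"PVDE at 12%: {pvde_12:.2f}")
print(f"Change:      {pvde_12 - pvde_10:.2f}")
```

The point is that this kind of question has one right answer, and a spreadsheet or a few lines of code will get it every time.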

How to do this in practice

I expect that this would look something like a bot in your Teams contacts that can answer these types of basic questions (and Microsoft is already building ChatGPT integrations into Teams).

That bot would have been trained on your documentation, which you would have uploaded to your preferred provider of secure cloud storage.

Conclusions

ChatGPT and other natural language models are currently capable of retrieving basic information from documentation and memos, and could be used to support actuaries in tasks where they have to retrieve information from documentation, as long as that information does not require analysis.